What is MapReduce?

MapReduce is a data processing model used to process data in parallel across a distributed cluster. It is based on the paper "MapReduce: Simplified Data Processing on Large Clusters," published by Google in 2004. The paradigm has two phases: the mapper phase and the reducer phase. The Mapper receives its input as key-value pairs, and its output is fed to the Reducer as input. The Reducer runs only after the Mapper has finished. The Reducer also takes its input in key-value format, and its output is the final output.

Steps in MapReduce

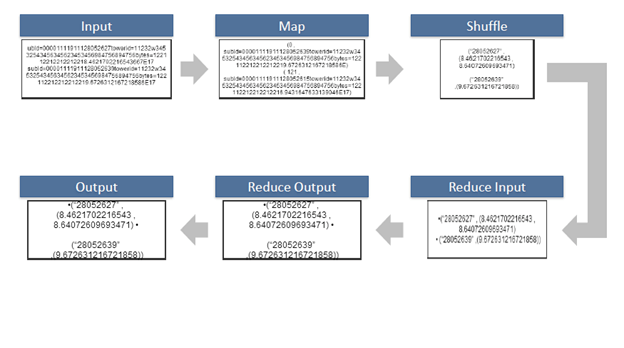

- The map function takes data in the form of <key, value> pairs and returns a list of <key, value> pairs. The keys in this list are not necessarily unique.

- Hadoop applies sort and shuffle to the output of the map. This step acts on the list of <key, value> pairs and emits each unique key together with the list of values associated with it: <key, list(values)>.

- The output of sort and shuffle is sent to the reducer phase. The reducer applies a defined function to the list of values for each unique key, and the final <key, value> output is stored or displayed.
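The three steps above can be sketched in plain Java without Hadoop. This is only an in-memory illustration under simplifying assumptions: the class name `MapReduceSketch`, its method names, and the sample lines are hypothetical, and everything runs in a single process rather than on a cluster.

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class MapReduceSketch {
    // Map step: emit one <word, 1> pair per token; keys may repeat.
    public static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String token : line.trim().split("\\s+")) {
            if (!token.isEmpty()) {
                pairs.add(new AbstractMap.SimpleEntry<>(token, 1));
            }
        }
        return pairs;
    }

    // Sort and shuffle step: group values by key; the TreeMap keeps keys sorted.
    public static Map<String, List<Integer>> shuffle(List<Map.Entry<String, Integer>> pairs) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (Map.Entry<String, Integer> pair : pairs) {
            grouped.computeIfAbsent(pair.getKey(), k -> new ArrayList<>()).add(pair.getValue());
        }
        return grouped;
    }

    // Reduce step: collapse the list of values for one key into a single value.
    public static int reduce(String key, List<Integer> values) {
        int sum = 0;
        for (int value : values) {
            sum += value;
        }
        return sum;
    }

    // Chain the three steps: map every line, shuffle, then reduce each key.
    public static Map<String, Integer> run(List<String> lines) {
        List<Map.Entry<String, Integer>> mapped = new ArrayList<>();
        for (String line : lines) {
            mapped.addAll(map(line));
        }
        Map<String, Integer> result = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> entry : shuffle(mapped).entrySet()) {
            result.put(entry.getKey(), reduce(entry.getKey(), entry.getValue()));
        }
        return result;
    }

    public static void main(String[] args) {
        System.out.println(run(Arrays.asList("deer bear river", "car car river", "deer car bear")));
        // prints {bear=2, car=3, deer=2, river=2}
    }
}
```

Note that the intermediate list produced by `map` contains `car` three times, while the grouped structure produced by `shuffle` contains it once with the values `[1, 1, 1]`.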

Sort and Shuffle

The sort and shuffle step occurs on the output of the Mapper, before the Reducer. When a Mapper task is complete, its results are sorted by key, partitioned if there are multiple reducers, and then written to disk. Using the output <k2, v2> from each Mapper, all the values for each unique key k2 are collected. The output of the shuffle phase, in the form <k2, list(v2)>, is sent as input to the reducer phase.

Usage of MapReduce

- It can be used in various applications such as document clustering, distributed sorting, and web link-graph reversal.

- It can be used for distributed pattern-based searching.

- We can also use MapReduce in machine learning.

- It was used by Google to regenerate Google's index of the World Wide Web.

- It can be used in multiple computing environments such as multi-cluster, multi-core, and mobile environments.
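As one illustration of the uses listed above, web link-graph reversal fits the map and reduce shape directly: the map step emits a <target, source> pair for every link, and the reduce step collects all sources for each target. The following is a minimal in-memory sketch; the class name `LinkReversalSketch` and the page names are hypothetical, and no Hadoop classes are involved.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class LinkReversalSketch {
    // Map step: for each link source -> target, emit the pair {target, source}.
    public static List<String[]> map(String source, List<String> targets) {
        List<String[]> pairs = new ArrayList<>();
        for (String target : targets) {
            pairs.add(new String[] {target, source});
        }
        return pairs;
    }

    // Shuffle and reduce: gather every source page that links to each target.
    public static Map<String, List<String>> reverse(Map<String, List<String>> outgoingLinks) {
        Map<String, List<String>> incoming = new TreeMap<>();
        for (Map.Entry<String, List<String>> page : outgoingLinks.entrySet()) {
            for (String[] pair : map(page.getKey(), page.getValue())) {
                incoming.computeIfAbsent(pair[0], k -> new ArrayList<>()).add(pair[1]);
            }
        }
        return incoming;
    }

    public static void main(String[] args) {
        Map<String, List<String>> links = new LinkedHashMap<>();
        links.put("a.html", Arrays.asList("b.html", "c.html"));
        links.put("b.html", Arrays.asList("c.html"));
        System.out.println(reverse(links));
        // prints {b.html=[a.html], c.html=[a.html, b.html]}
    }
}
```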

Prerequisite

Before learning MapReduce, you should have basic knowledge of Big Data.

Audience

Our MapReduce tutorial is designed to help beginners and professionals.

Data Flow In MapReduce

MapReduce is used to process huge amounts of data. To handle the incoming data in a parallel and distributed way, the data flows through several phases.

Phases of MapReduce data flow

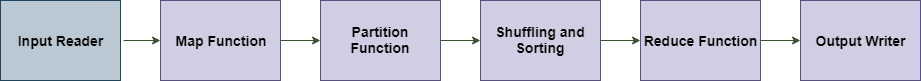

Input reader

The input reader reads the incoming data and splits it into data blocks of an appropriate size (64 MB to 128 MB). Each data block is associated with a Map function. Once the input reader has read the data, it generates the corresponding key-value pairs. The input files reside in HDFS.
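To make the input reader's two jobs concrete, the sketch below estimates how many blocks a file yields and turns text into key-value records. It assumes a 128 MB block size and line-oriented input where the key is the byte offset of the line and the value is the line itself, which is how Hadoop's text input format presents records. The class `InputReaderSketch` is hypothetical, and the split count is a simple ceiling division rather than Hadoop's actual split logic.

```java
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;

public class InputReaderSketch {
    // Simplified estimate: number of blocks needed to cover the file (ceiling division).
    public static long numSplits(long fileBytes, long splitBytes) {
        return (fileBytes + splitBytes - 1) / splitBytes;
    }

    // Turn raw text into <byte offset, line> records, one per line.
    public static Map<Long, String> toRecords(String text) {
        Map<Long, String> records = new LinkedHashMap<>();
        long offset = 0;
        for (String line : text.split("\n")) {
            records.put(offset, line);
            // Advance past the line's bytes plus the newline character.
            offset += line.getBytes(StandardCharsets.UTF_8).length + 1;
        }
        return records;
    }

    public static void main(String[] args) {
        long mb = 1024L * 1024L;
        System.out.println(numSplits(300 * mb, 128 * mb)); // prints 3
        System.out.println(toRecords("hello world\nhello hadoop\n"));
        // prints {0=hello world, 12=hello hadoop}
    }
}
```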

Map function

The map function processes the incoming key-value pairs and generates the corresponding output key-value pairs. The map input and output types may differ from each other.

Partition function

The partition function assigns the output of each Map function to the appropriate reducer. The key and value are passed to this function, and it returns the index of the reducer.

Shuffling and Sorting

The data is shuffled between and within nodes so that it moves out of the map phase and is ready to be processed by the reduce function. Shuffling can sometimes take considerable computation time. The sorting operation is performed on the input data for the Reduce function: the data is compared using a comparison function and arranged in sorted order.
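The partition and sort steps can be sketched together in plain Java. The example below assumes hash partitioning (the key's hash code, made non-negative, modulo the number of reducers) and uses a `TreeMap` to stand in for the sort by key. The class `PartitionSortSketch` and its helper `pair` are hypothetical, and `String.hashCode` is used in place of Hadoop's own key types.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class PartitionSortSketch {
    // Hypothetical helper to build one <key, value> pair of map output.
    public static Map.Entry<String, Integer> pair(String key, int value) {
        return new SimpleEntry<>(key, value);
    }

    // Hash partitioning: the same key always maps to the same reducer index.
    public static int partition(String key, int numReducers) {
        // Masking the sign bit keeps the result non-negative.
        return (key.hashCode() & Integer.MAX_VALUE) % numReducers;
    }

    // Route each pair to its reducer; each TreeMap keeps that reducer's keys sorted.
    public static List<TreeMap<String, List<Integer>>> shuffleAndSort(
            List<Map.Entry<String, Integer>> mapOutput, int numReducers) {
        List<TreeMap<String, List<Integer>>> reducerInputs = new ArrayList<>();
        for (int i = 0; i < numReducers; i++) {
            reducerInputs.add(new TreeMap<>());
        }
        for (Map.Entry<String, Integer> p : mapOutput) {
            reducerInputs.get(partition(p.getKey(), numReducers))
                    .computeIfAbsent(p.getKey(), k -> new ArrayList<>())
                    .add(p.getValue());
        }
        return reducerInputs;
    }

    public static void main(String[] args) {
        List<Map.Entry<String, Integer>> mapOutput = Arrays.asList(
                pair("river", 1), pair("car", 1), pair("bear", 1), pair("car", 1));
        List<TreeMap<String, List<Integer>>> inputs = shuffleAndSort(mapOutput, 2);
        for (int i = 0; i < inputs.size(); i++) {
            System.out.println("reducer " + i + " receives " + inputs.get(i));
        }
    }
}
```

Because the reducer index depends only on the key, every value for `car` ends up at the same reducer, which is what lets each reducer see the complete list of values for its keys.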

Reduce function

The Reduce function is invoked for each unique key. The keys are already arranged in sorted order. The Reduce function iterates over the values associated with each key and generates the corresponding output.

Output writer

Once the data has flowed through all of the above phases, the output writer executes. Its role is to write the Reduce output to stable storage.

MapReduce API

In this section, we focus on MapReduce APIs. Here, we learn about the classes and methods used in MapReduce programming.

MapReduce Mapper Class

In MapReduce, the role of the Mapper class is to map the input key-value pairs to a set of intermediate key-value pairs. It transforms the input records into intermediate records. These intermediate records are associated with a given output key and passed to the Reducer for the final output.

MapReduce Word Count Example

In the MapReduce word count example, we find the frequency of each word. Here, the role of the Mapper is to emit each word as a key with a value of 1, and the role of the Reducer is to aggregate the values that share a common key. Everything is represented as key-value pairs.

Pre-requisite

- Java installation: check whether Java is installed using the following command.

  ```shell
  java -version
  ```

- Hadoop installation: check whether Hadoop is installed using the following command.

  ```shell
  hadoop version
  ```

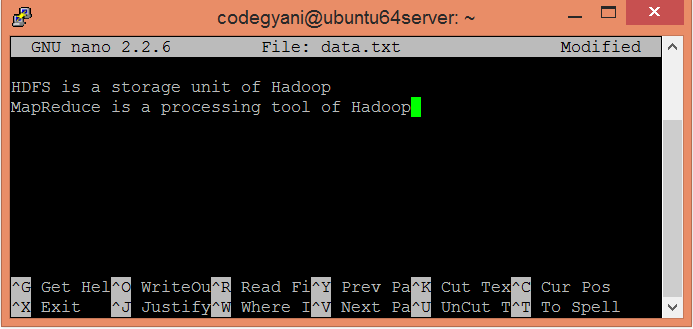

Steps to execute MapReduce word count example

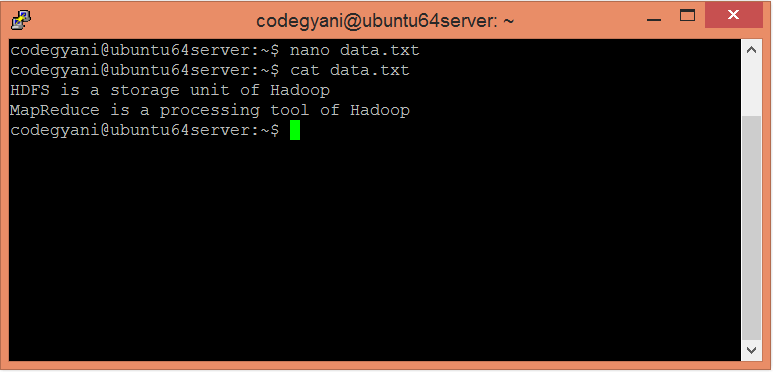

- Create a text file on your local machine and write some text into it.

  ```shell
  $ nano data.txt
  ```

- Check the text written in the data.txt file.

  ```shell
  $ cat data.txt
  ```

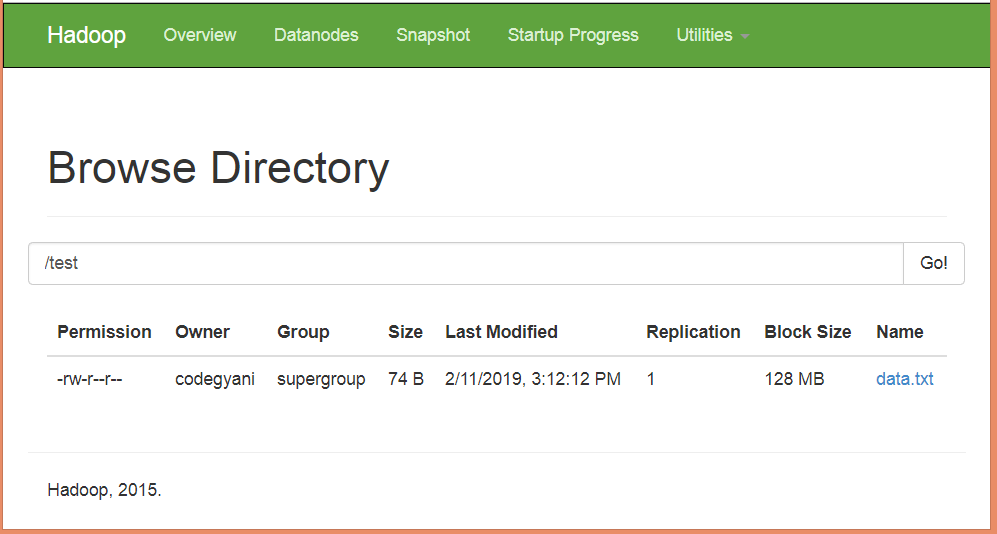

- Create a directory in HDFS in which to keep the text file.

  ```shell
  $ hdfs dfs -mkdir /test
  ```

- Upload the data.txt file to HDFS in that directory.

  ```shell
  $ hdfs dfs -put /home/codegyani/data.txt /test
  ```

- Write the MapReduce program using Eclipse.

File: WC_Mapper.java

```java
package com.javatpoint;

import java.io.IOException;
import java.util.StringTokenizer;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapred.MapReduceBase;
import org.apache.hadoop.mapred.Mapper;
import org.apache.hadoop.mapred.OutputCollector;
import org.apache.hadoop.mapred.Reporter;

public class WC_Mapper extends MapReduceBase implements Mapper<LongWritable, Text, Text, IntWritable> {

    // Reused for every token so that no new objects are allocated per word.
    private final static IntWritable one = new IntWritable(1);
    private Text word = new Text();

    // key: position of the line in the input; value: the text of the line.
    public void map(LongWritable key, Text value, OutputCollector<Text, IntWritable> output,
            Reporter reporter) throws IOException {
        String line = value.toString();
        StringTokenizer tokenizer = new StringTokenizer(line);
        while (tokenizer.hasMoreTokens()) {
            // Emit <word, 1> for each whitespace-separated token.
            word.set(tokenizer.nextToken());
            output.collect(word, one);
        }
    }
}
```

File: WC_Reducer.java

```java
package com.javatpoint;

import java.io.IOException;
import java.util.Iterator;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapred.MapReduceBase;
import org.apache.hadoop.mapred.OutputCollector;
import org.apache.hadoop.mapred.Reducer;
import org.apache.hadoop.mapred.Reporter;

public class WC_Reducer extends MapReduceBase implements Reducer<Text, IntWritable, Text, IntWritable> {

    // Called once per unique word with all the counts emitted for that word.
    public void reduce(Text key, Iterator<IntWritable> values, OutputCollector<Text, IntWritable> output,
            Reporter reporter) throws IOException {
        int sum = 0;
        while (values.hasNext()) {
            sum += values.next().get();
        }
        // Emit <word, total count>.
        output.collect(key, new IntWritable(sum));
    }
}
```

File: WC_Runner.java

```java
package com.javatpoint;

import java.io.IOException;

import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapred.FileInputFormat;
import org.apache.hadoop.mapred.FileOutputFormat;
import org.apache.hadoop.mapred.JobClient;
import org.apache.hadoop.mapred.JobConf;
import org.apache.hadoop.mapred.TextInputFormat;
import org.apache.hadoop.mapred.TextOutputFormat;

public class WC_Runner {

    // args[0]: input path in HDFS; args[1]: output path in HDFS.
    public static void main(String[] args) throws IOException {
        JobConf conf = new JobConf(WC_Runner.class);
        conf.setJobName("WordCount");

        // Types of the final <key, value> output.
        conf.setOutputKeyClass(Text.class);
        conf.setOutputValueClass(IntWritable.class);

        conf.setMapperClass(WC_Mapper.class);
        // The reducer doubles as the combiner, pre-summing counts on the map side.
        conf.setCombinerClass(WC_Reducer.class);
        conf.setReducerClass(WC_Reducer.class);

        conf.setInputFormat(TextInputFormat.class);
        conf.setOutputFormat(TextOutputFormat.class);

        FileInputFormat.setInputPaths(conf, new Path(args[0]));
        FileOutputFormat.setOutputPath(conf, new Path(args[1]));

        // Submit the job and wait for it to finish.
        JobClient.runJob(conf);
    }
}
```
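The runner registers `WC_Reducer` as the combiner as well as the reducer. That is safe for word count because addition is associative and commutative, so summing partial counts per map task and then summing the partial sums gives the same totals. The plain-Java sketch below illustrates this under simplifying assumptions: the class `CombinerSketch` and its methods are hypothetical stand-ins for the Hadoop classes, and each input line is treated as the output of one map task.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class CombinerSketch {
    // Stand-in for WC_Mapper: emit <word, 1> for every token in the line.
    public static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String token : line.trim().split("\\s+")) {
            if (!token.isEmpty()) {
                pairs.add(new SimpleEntry<>(token, 1));
            }
        }
        return pairs;
    }

    // Stand-in for WC_Reducer: sum the values of each key.
    public static Map<String, Integer> sumByKey(List<Map.Entry<String, Integer>> pairs) {
        Map<String, Integer> sums = new TreeMap<>();
        for (Map.Entry<String, Integer> p : pairs) {
            sums.merge(p.getKey(), p.getValue(), Integer::sum);
        }
        return sums;
    }

    // Every <word, 1> pair is sent to the reduce step unchanged.
    public static Map<String, Integer> withoutCombiner(List<String> lines) {
        List<Map.Entry<String, Integer>> shuffled = new ArrayList<>();
        for (String line : lines) {
            shuffled.addAll(map(line));
        }
        return sumByKey(shuffled);
    }

    // Each map task's output is pre-summed before being sent to the reduce step.
    public static Map<String, Integer> withCombiner(List<String> lines) {
        List<Map.Entry<String, Integer>> shuffled = new ArrayList<>();
        for (String line : lines) {
            shuffled.addAll(sumByKey(map(line)).entrySet());
        }
        return sumByKey(shuffled);
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList("car car river", "deer car bear");
        System.out.println(withoutCombiner(lines).equals(withCombiner(lines))); // prints true
    }
}
```

With the combiner, the first line contributes the single pair <car, 2> to the shuffle instead of two <car, 1> pairs, which reduces the amount of data moved between nodes.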

- Create the jar file of this program and name it wordcountdemo.jar.

- Run the jar file.

  ```shell
  hadoop jar /home/codegyani/wordcountdemo.jar com.javatpoint.WC_Runner /test/data.txt /r_output
  ```

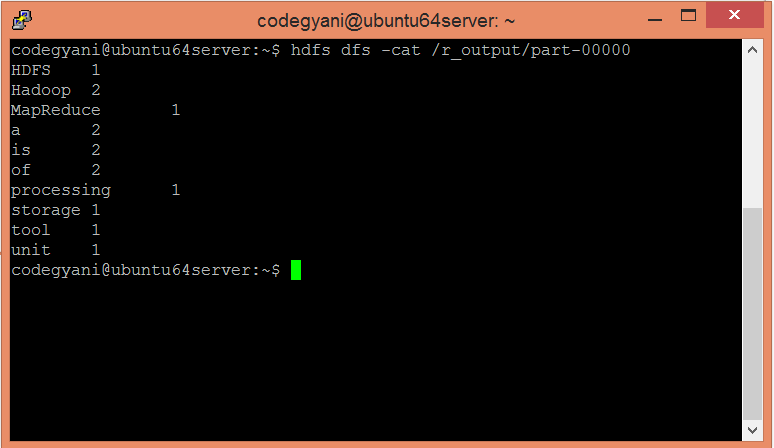

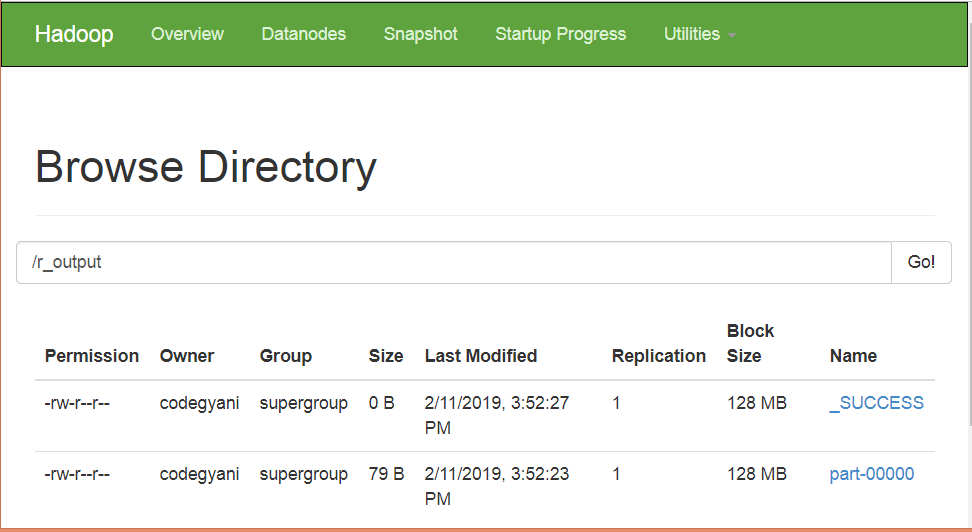

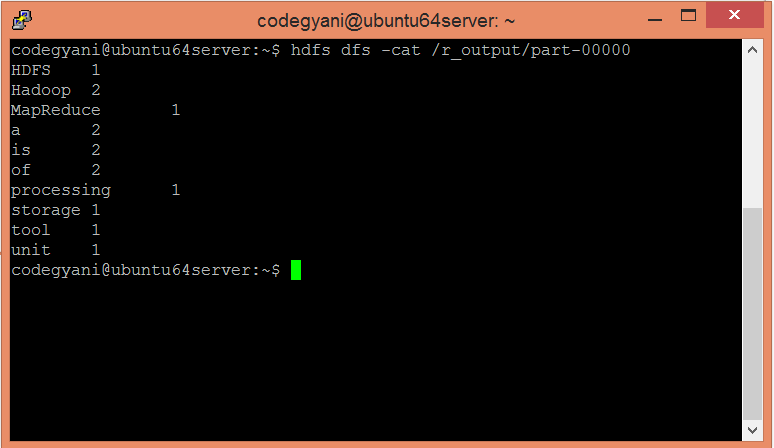

- The output is stored in /r_output/part-00000.

- Now execute the following command to see the output.

  ```shell
  hdfs dfs -cat /r_output/part-00000
  ```
